Project Spectrum: using generative AI to enhance inflation nowcasting

Updated 17 February 2026

The availability of web-scraped and scanner data sets provides central banks with unprecedented access to real-time data on individual product prices. However, to use this data for inflation nowcasting and forecasting, analysts need to classify products according to statistical conventions. In the absence of reliable, scalable classification methods, inflation analysts are flooded with data but lack actionable insight.

Product classification at the scale of web-scraped data represents a major challenge. Manually processing this amount of data is not feasible. Classification using large language models (LLMs) is promising, but with current models the processing time and cost become prohibitively high.

Project Spectrum used the ECB's "Daily Price Dataset" (DPD), which contains billions of daily price observations for 34 million unique products. At current rates, classifying this data set using GPT-5 would take over six months of computing time at a cost exceeding EUR 0.5 million.
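To put these figures in per-product terms, the short calculation below derives the implied unit cost and processing rate. It is a back-of-the-envelope sketch: the inputs are the lower bounds quoted above, and the conversion of "six months" into 182.5 days is an assumption made here for illustration.

```python
# Figures quoted in the text; both LLM figures are lower bounds
# ("over six months", "exceeding EUR 0.5 million").
N_PRODUCTS = 34_000_000
LLM_COST_EUR = 500_000
LLM_DAYS = 182.5  # assumption: "six months" taken as half of a 365-day year


def per_product_cost(total_cost_eur, n_products):
    """Average cost in EUR of classifying one product."""
    return total_cost_eur / n_products


def products_per_second(n_products, days):
    """Average rate implied by finishing in `days` of continuous processing."""
    return n_products / (days * 24 * 60 * 60)


llm_cost_per_product = per_product_cost(LLM_COST_EUR, N_PRODUCTS)
llm_rate = products_per_second(N_PRODUCTS, LLM_DAYS)

print(f"Implied LLM cost per product: at least EUR {llm_cost_per_product:.4f}")
print(f"Implied LLM processing rate: at most {llm_rate:.2f} products per second")
```

Because the quoted totals are bounds rather than exact values, the results should be read the same way: a floor on the unit cost and a ceiling on the processing rate.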

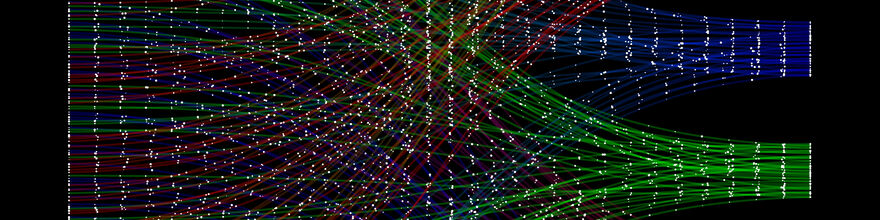

Project Spectrum, a collaboration between the Bank for International Settlements (BIS), the Deutsche Bundesbank and the European Central Bank, explored an alternative approach in which artificial intelligence (AI) was used only to transform product descriptions into high-dimensional text embeddings. These were then classified into product categories using classic machine learning algorithms. Text embedding is a foundational AI technique used by many natural language processing applications. This method achieved accuracy levels comparable to LLM prompting, but at a fraction of the cost: the entire DPD was classified in just five days for approximately EUR 1,500.
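The two-step idea described above (embed first, then classify with a conventional algorithm) can be sketched roughly as follows. This is a minimal illustration and not the project's implementation: `toy_embed` is a hypothetical stand-in that hashes character trigrams instead of calling a real text-embedding model, the centroid-based classifier is only one assumed example of a classic algorithm, and the product names and category labels are invented.

```python
import hashlib
import math

DIM = 4096  # toy dimensionality; real embedding models define their own


def toy_embed(text):
    """Hypothetical stand-in for an embedding model: hashed character
    trigrams, L2-normalised. A real pipeline would call a model here."""
    vec = [0.0] * DIM
    padded = f"  {text.lower()}  "
    for i in range(len(padded) - 2):
        digest = hashlib.sha256(padded[i:i + 3].encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def fit_centroids(labelled):
    """Average the embeddings of each category, then normalise the result."""
    sums = {}
    for text, label in labelled:
        acc = sums.setdefault(label, [0.0] * DIM)
        for i, v in enumerate(toy_embed(text)):
            acc[i] += v
    centroids = {}
    for label, acc in sums.items():
        norm = math.sqrt(sum(v * v for v in acc)) or 1.0
        centroids[label] = [v / norm for v in acc]
    return centroids


def classify(text, centroids):
    """Return the category whose centroid has the highest cosine similarity."""
    emb = toy_embed(text)
    sims = {label: sum(a * b for a, b in zip(emb, c))
            for label, c in centroids.items()}
    return max(sims, key=sims.get)


# Invented reference set: (product description, category label).
REFERENCE = [
    ("whole milk 1l", "dairy"),
    ("semi-skimmed milk 2l", "dairy"),
    ("cheddar cheese 200g", "dairy"),
    ("white bread loaf", "bakery"),
    ("wholemeal bread rolls", "bakery"),
    ("butter croissant", "bakery"),
]

centroids = fit_centroids(REFERENCE)
print(classify("organic milk 1l", centroids))
print(classify("sliced white bread", centroids))
```

The design point is that the expensive model is used only once per product, to produce a vector. The classifier that follows is cheap to train and to run, which is consistent with the cost difference reported above.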

- Report: Project Spectrum: using generative AI to enhance inflation nowcasting (17 February 2026)

Besides classifying all records in the current DPD data set, the project has developed this solution as a pipeline that can classify new products as they are added to the DPD. In addition, to ensure continuous improvement, an iterative algorithm was implemented to gradually expand the reference data set. By selectively adding manually labelled data to the reference and validation sets, this algorithm systematically refines the classification logic, enhances overall predictive accuracy and adapts to a changing product range.
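One way such an iterative expansion loop could look is sketched below. The text does not say how items are selected for manual labelling, so the rule used here (send the products the classifier is least sure about to a human, a form of uncertainty sampling) is an assumption. `toy_embed`, `ask_human` and all product names are hypothetical, and only the reference set is modelled, not the validation set.

```python
import hashlib
import math

DIM = 4096  # toy dimensionality for the stand-in embedding


def toy_embed(text):
    """Hypothetical stand-in for an embedding model (hashed trigrams)."""
    vec = [0.0] * DIM
    padded = f"  {text.lower()}  "
    for i in range(len(padded) - 2):
        digest = hashlib.sha256(padded[i:i + 3].encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def margin(text, reference):
    """Gap between the best and second-best category, where each category
    is scored by its most similar reference item. Small gap = uncertain."""
    emb = toy_embed(text)
    best = {}
    for ref_text, label in reference:
        sim = sum(a * b for a, b in zip(emb, toy_embed(ref_text)))
        best[label] = max(best.get(label, 0.0), sim)
    ranked = sorted(best.values(), reverse=True)
    return ranked[0] - (ranked[1] if len(ranked) > 1 else 0.0)


def expand_reference(reference, pool, ask_human, rounds=1, batch=1):
    """Each round, move the `batch` least certain pool items into the
    reference set, labelled by the (hypothetical) human annotator."""
    reference, pool = list(reference), list(pool)
    for _ in range(rounds):
        if not pool:
            break
        pool.sort(key=lambda text: margin(text, reference))
        picked, pool = pool[:batch], pool[batch:]
        reference.extend((text, ask_human(text)) for text in picked)
    return reference, pool


# Invented data; the dictionary plays the role of the manual labeller.
HUMAN_LABELS = {
    "semi-skimmed milk 2l": "dairy",
    "rye bread loaf": "bakery",
    "laundry detergent": "household",
}
start = [("whole milk 1l", "dairy"), ("white bread loaf", "bakery")]

new_reference, remaining = expand_reference(
    start, list(HUMAN_LABELS), HUMAN_LABELS.get
)
print(new_reference[-1], remaining)
```

In this toy run the product that resembles neither existing category is the one routed to manual labelling, which is how a loop of this kind can pick up new product types as the range changes.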

The experimental results focus on data collected in the euro area; however, the approach is globally applicable thanks to the multilingual capabilities of AI models. Recent BIS research (see Working Paper No. 1240) has shown that, when properly curated, these vast datasets can deepen our understanding of price setting and inflation dynamics in a wide set of countries.

By turning raw, fragmented product descriptions into structured data, Project Spectrum equips analysts and policymakers with timely, detailed insights into price developments. Ultimately, the project contributes to an emerging new generation of AI-powered analysis, where data abundance can be translated more easily into actionable economic understanding.